Taking a drug to first-in-human trials in a bespoke device for targeted intranasal delivery

In this article, featured in ONdrugDelivery, Mark Allen, Andrew Fiorini and Shai Assia discuss the need to develop delivery devices early when formulating nasally delivered drugs for systemic and local action, and a method by which the route to clinic can be made easier, faster and cheaper.

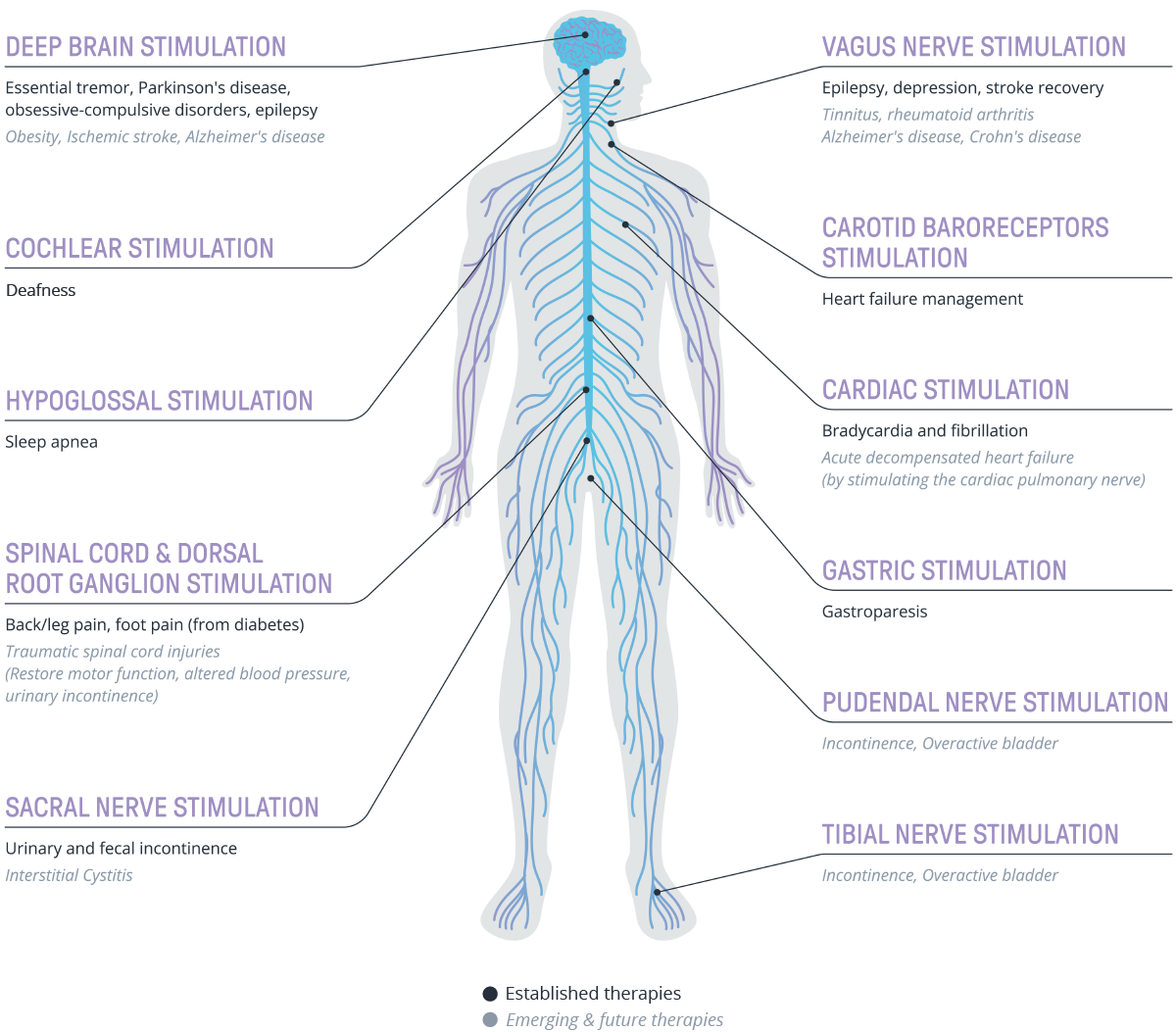

Systemic delivery has long been the mainstay of drug administration, whether via the oral, injectable, inhalable, nasal or another delivery route. There are, of course, many well-documented downsides of systemic delivery, including unintended side effects in locations beyond the drug target and reduced efficacy where the dose must be limited to keep those side effects within safe bounds. Targeted drug delivery can address many of those issues [1], with targeted intranasal delivery, in particular, having the potential to treat many debilitating conditions, from as yet underserved conditions, such as cluster headaches, through to central nervous system (CNS) conditions such as Alzheimer’s disease. Indeed, there are currently many active studies on therapeutic delivery via this specialised route [2]. These targeted treatments have the potential to improve the lives of patients, their families and their carers immeasurably.

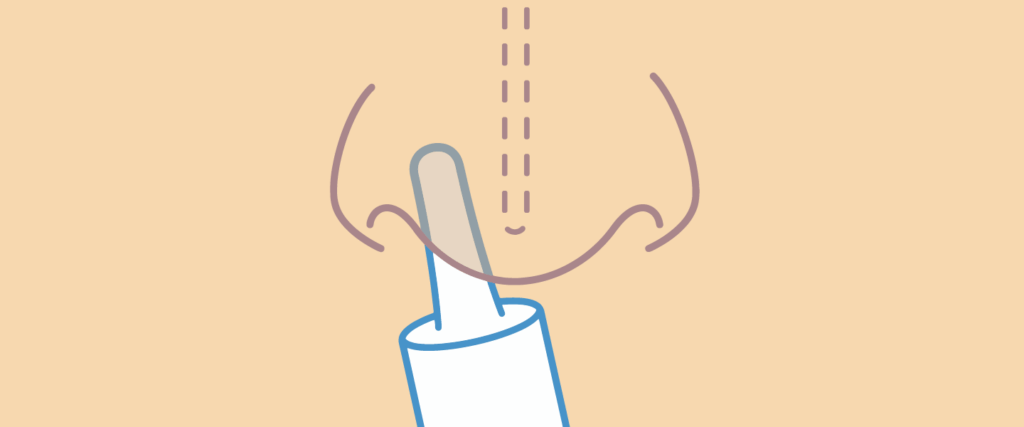

However, the key challenge lies in achieving the delivery of an accurate dose to a precise location within the nasal anatomy. A device that can enable that targeting is intrinsically linked to drug efficacy, meaning that it is necessary to consider device development earlier in the process than usual. In comparison, a drug intended for parenteral delivery has the well-trodden option of using a vial and syringe for administration by a healthcare practitioner during early development phases while proving basic safety and efficacy. A more complex drug delivery system can then be sourced or designed (if required) in parallel, ready for use in Phase III trials as part of a combination product development pathway.

This off-the-shelf-device approach, aimed at reducing the risk and cost associated with early-stage clinical studies, is not an option available to those developing highly targeted intranasal delivery – most of the currently available nasal devices are designed to coat as much of the nasal cavity as possible, making them unsuitable for delivery to a precise area. A nasal device with a broad spray pattern may even lead to the drug not reaching the intended target area at the required dose level.

So, how can a new, bespoke device be developed and made available for the initial Phase I and II trials? These are complex devices that need to be suitably well designed to ensure that patients or clinical professionals can use them during clinical trials to administer the drug accurately and repeatedly to the correct location, often deep in the nasal cavity.

To answer this, a minimum viable product (MVP) prototype device can be designed for the needs of the Phase I and II clinical trials. Designing for use within the controlled setting of a clinical trial and prioritising solely patient safety, spray geometry and usability (relating to holding and positioning the device) at this stage can considerably reduce the effort, cost and time required to reach the clinic. This MVP device will then allow the safety, efficacy and feasibility of the self-administered, targeted intranasal delivery method to be proven during these early clinical trials. The device performance and usability are critical to correctly delivering the drug, so learnings from this MVP device can be used in the further development and refinement of the device for Phase III trials, as well as the future commercial-scale device. Carrying out risk assessments and timely iterative testing (via formative studies) on the usability of the device is crucial; misuse or an inability to use the device could stop the patient from administering the drug to the intended location within the nasal cavity, or even cause harm, ultimately preventing the drug from achieving its intended therapeutic effect. Therefore, usability and human factors engineering must be incorporated into the design and development process from the start.

Defining a Usable Design

The challenge for the device development team is to successfully incorporate design for usability throughout a “lean” MVP device development process, meaning that a safe, usable device must be produced with reduced cost compared with traditional development processes. This can be achieved by careful adaptations to the typical design for usability process. When applying user-centric design principles, as outlined in ISO 9241-210, four steps should be followed:

- Understand the context of use

- Define the requirements

- Build the design

- Evaluate the design against the requirements.

Although this is not the only relevant standard (others, such as IEC 62366-1, cover the application of usability engineering to medical devices), ISO 9241-210 provides a set of recommendations and requirements for applying user-centric design principles within design and development activities. These processes help to identify “real” user needs and usability challenges, which can then be used to establish a clearer framework for user interaction and interface design.

Understand the Context of Use

Consideration of the patient, including when and why they are receiving treatment, is essential. For example, if a new targeted nasal delivery device is to replace a healthcare practitioner-administered treatment, it is likely that the patient currently visits a clinic to receive their treatment, disrupting their schedule and placing an additional burden on the healthcare system. A self-administered device will naturally put the patient in control of their treatment and improve their quality of life – as has been witnessed through the advent of self-injection devices. However, targeted nasal delivery relies on the patient not only following the treatment regimen and using the device correctly, but also positioning the device accurately to ensure that the drug is delivered to the precise location intended.

Another key factor in the design process is predicting how a patient may interpret the device and, therefore, how they would go about using it. This is where the concept of mental models is useful, as it reflects the patient’s perception of how a device works and how to use it based on the patient’s experiences of similar devices. Perception is what a patient sees, hears, touches or smells, which, in turn, triggers mental recall and cognition, which then drives their actions.

The best form of information gathering is to consult the patients themselves – they know their needs, and frustrations, better than anyone. Clinicians and caregivers can provide additional information about patient behaviour and trends based on their experience across a wide range of patients, but their answers should take second place.

Speaking to patients is crucial to building an understanding of the context of use; however, care must be taken with the specific questions asked. They must be phrased to avoid leading patients towards particular answers, while still gathering the information required to guide the device design via user needs. Working with experienced insight researchers and human factors experts can greatly increase the value gleaned from patient interaction throughout the design and development process.

Define the Requirements

Once the context of use is understood, the findings and needs of the patient must be converted from a range of opinions and perceptions into clearly defined requirements. It is essential to align patient needs with requirements in a format that can be validated. Similarly, technical requirements need to be verifiable, while also ensuring a cost-effective and usable device design.

User requirements should drive the technical requirements for the device. Requirements are living documents, so each set of patient interviews will typically lead to updates to the requirements throughout the design process. Equally, unknown parameters in the requirements documents can be used to drive patient interviews that can, in turn, be used to refine the requirements further or provide specific values for the device design team. These documents and patient interviews can then both be iteratively tested and updated as required.
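The link between user requirements, technical requirements and the open questions that feed the next round of patient interviews can be kept in a simple traceable structure. The following is a minimal sketch only, not a prescribed tool or format: the class names, requirement identifiers, wording and values are all hypothetical and invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class TechnicalRequirement:
    req_id: str
    description: str
    # None marks a value that is still unknown and needs patient input.
    target: Optional[str] = None


@dataclass
class UserRequirement:
    req_id: str
    need: str
    # Technical requirements derived from this user need.
    technical: List[TechnicalRequirement] = field(default_factory=list)


def open_questions(user_reqs: List[UserRequirement]) -> List[Tuple[str, str]]:
    """Return (user id, technical id) pairs whose target value is unknown."""
    return [
        (u.req_id, t.req_id)
        for u in user_reqs
        for t in u.technical
        if t.target is None
    ]


def untraced(user_reqs: List[UserRequirement]) -> List[str]:
    """Return ids of user needs with no technical requirement derived yet."""
    return [u.req_id for u in user_reqs if not u.technical]


# Hypothetical entries; ids, wording and values are invented for this sketch.
requirements = [
    UserRequirement(
        "UR-1",
        "Patient can hold the device steady in one hand",
        [TechnicalRequirement("TR-1", "Grip diameter", target=None)],
    ),
    UserRequirement(
        "UR-2",
        "Patient can tell when the dose has been delivered",
        [TechnicalRequirement("TR-2", "Audible click on actuation", target="present")],
    ),
    UserRequirement("UR-3", "Patient can position the nozzle correctly"),
]

print(open_questions(requirements))  # [('UR-1', 'TR-1')]
print(untraced(requirements))        # ['UR-3']
```

In this sketch, the first list gives the unknown parameters that could shape the next set of patient interviews, and the second flags user needs that have not yet driven any technical requirement.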

Build the Design

The design stage is the point at which activities can be prioritised to reduce development time and costs by differentiating between a prototype device suitable for first-in-human testing and a fully developed and validated device. Here, the typical process of concept generation followed by down selection (via assessment against device requirements) is used to identify a suitable device design for further development.

Once initial prototype devices are available, engineering testing against the requirements can be performed to provide confidence in the design. Full design verification testing is not required at this stage, but sufficient evidence should be generated in the key areas, including safety and dose delivery performance. Development and evaluation of the important training materials, such as the instructions for use, should be started, but with a lowered risk assessment burden, in the knowledge that there will be clinicians available during initial trials.

Focusing on the requirements of the MVP will accelerate time to clinic by concentrating on safety and usability. This MVP device is equivalent to a syringe and vial or prefilled syringe in injectable development for systemic treatments, so there will be future opportunities to refine the design for Phase III trials and commercial launch. This is an appropriate strategy, as the devices will only be used under supervision at this point. All learnings from the study can then be prioritised and incorporated into the final design as required, according to risks identified.

Evaluate Against Requirements

Once a final prototype has been developed, it must be evaluated against the design requirements by design review, engineering testing and formative human factors studies. This should incorporate a usability assessment for self-administration and simulate as many real functionalities as possible, including tactile, visual and auditory feedback from the device. This process should prioritise evaluating areas highlighted as high risk during previous activities, but also gather information on any additional learnings relevant to future design updates.

The Future of Targeted Intranasal Devices

The approach discussed here offers a route to developing a bespoke prototype device suitable for first-in-human trials of targeted nasal delivery. The success or failure of this strategy depends on the nature of the collaboration between the pharmaceutical partner and the device design engineers, as well as on the experience of the insight researchers and usability engineers. Experience in the process required to develop a usable device is critical to the successful outcome of such a project and will pave the way for bringing a device to market in this new and exciting area of nasal drug delivery. It will be fascinating to see just how many new, life-changing improvements will be made possible by targeted nasal delivery.

References

1. Hanson LR, Frey WH 2nd, “Intranasal delivery bypasses the blood-brain barrier to target therapeutic agents to the central nervous system and treat neurodegenerative disease”. BMC Neurosci, 2008, Vol 9(Suppl 3), S5.

2. Hallschmid M, “Intranasal Insulin for Alzheimer’s Disease”. CNS Drugs, 2021, Vol 35(1), pp 21–37.